AI workloads and infrastructure don't behave like traditional cloud infrastructure.

They're driven by model selection, token consumption, routing logic, prompt structure, caching behavior, GPU utilization, retry patterns, and data platform orchestration. These variables directly determine what you spend — and how efficiently AI performs in production.

Most cloud optimization tools were never built to analyze this layer.

PointFive was.

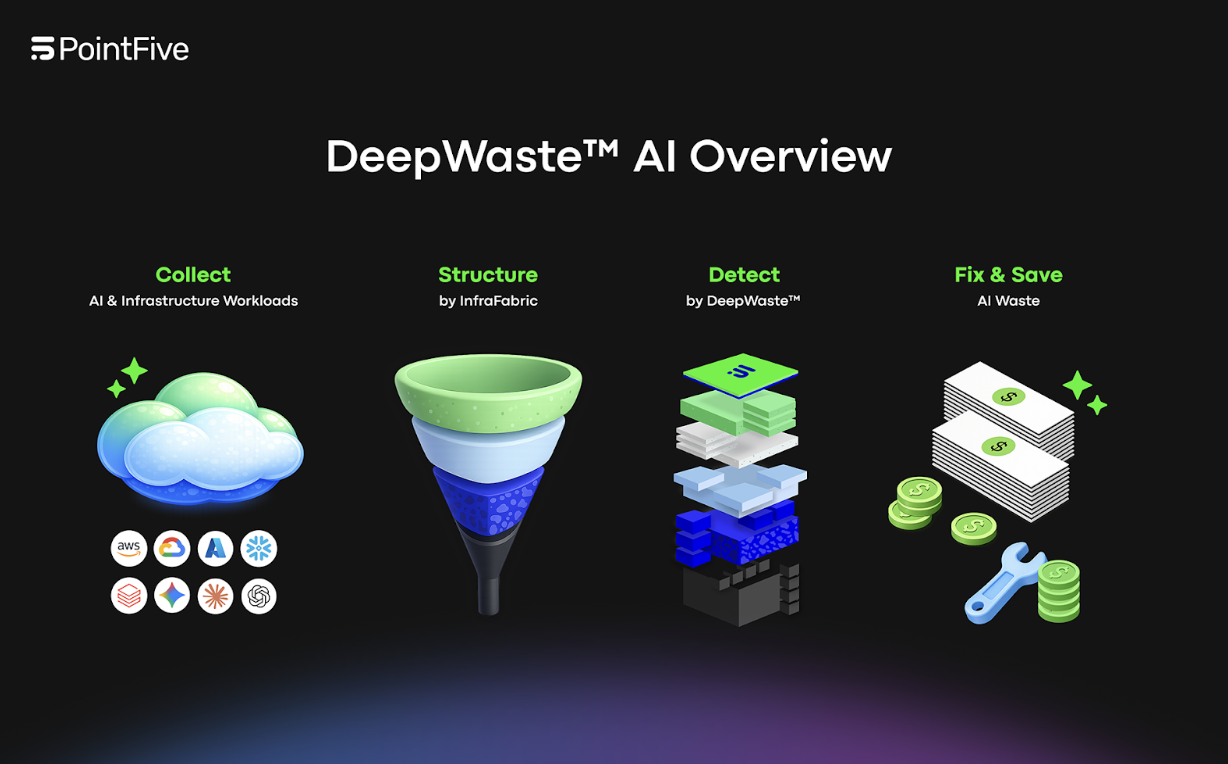

Today, we're introducing DeepWaste™ AI — a standalone, full-stack AI cost optimization module designed to continuously optimize LLM services, GPU infrastructure, and AI data platforms across every major cloud provider.

As organizations move from experimentation to production AI, inefficiency doesn't live in one place. It spans inference behavior, hardware allocation, orchestration design, and data platforms. DeepWaste AI was built to analyze that entire execution stack — as one system.

Full-Stack AI Coverage

DeepWaste AI provides native, agentless connectivity across AWS (Bedrock, SageMaker, AI managed services), Azure (Azure OpenAI, Azure ML, Cognitive Services), GCP (Vertex AI and AI services), and OpenAI and Anthropic direct APIs.

Beyond model services, DeepWaste AI also continuously optimizes GPU infrastructure — identifying underutilized GPUs, instance-type mismatches, idle capacity, and OS or driver configurations that limit throughput.

With native support for Snowflake and Databricks, optimization now extends across AI data platforms as well — completing end-to-end coverage from data ingestion through model inference.

This is not inference-only visibility. It is full-stack AI optimization.

Agentless by Design

DeepWaste AI connects directly to cloud APIs, LLM service metrics, GPU telemetry, and billing systems. No agents. No code changes. No instrumentation. By default, optimization runs using metadata, billing signals, performance metrics, and resource configuration data.

This enables organizations to uncover major structural inefficiencies in model routing, token allocation, caching behavior, retry loops, and hardware provisioning — while preserving privacy and minimizing data access.

Customers stay in control of depth. Optimization adapts accordingly.

How DeepWaste™ AI Detects Waste

DeepWaste AI doesn't analyze AI cost through a single lens. It evaluates inefficiency across models, tokens, infrastructure, and execution behavior simultaneously. Each invocation is structured, enriched, and analyzed across four optimization layers.

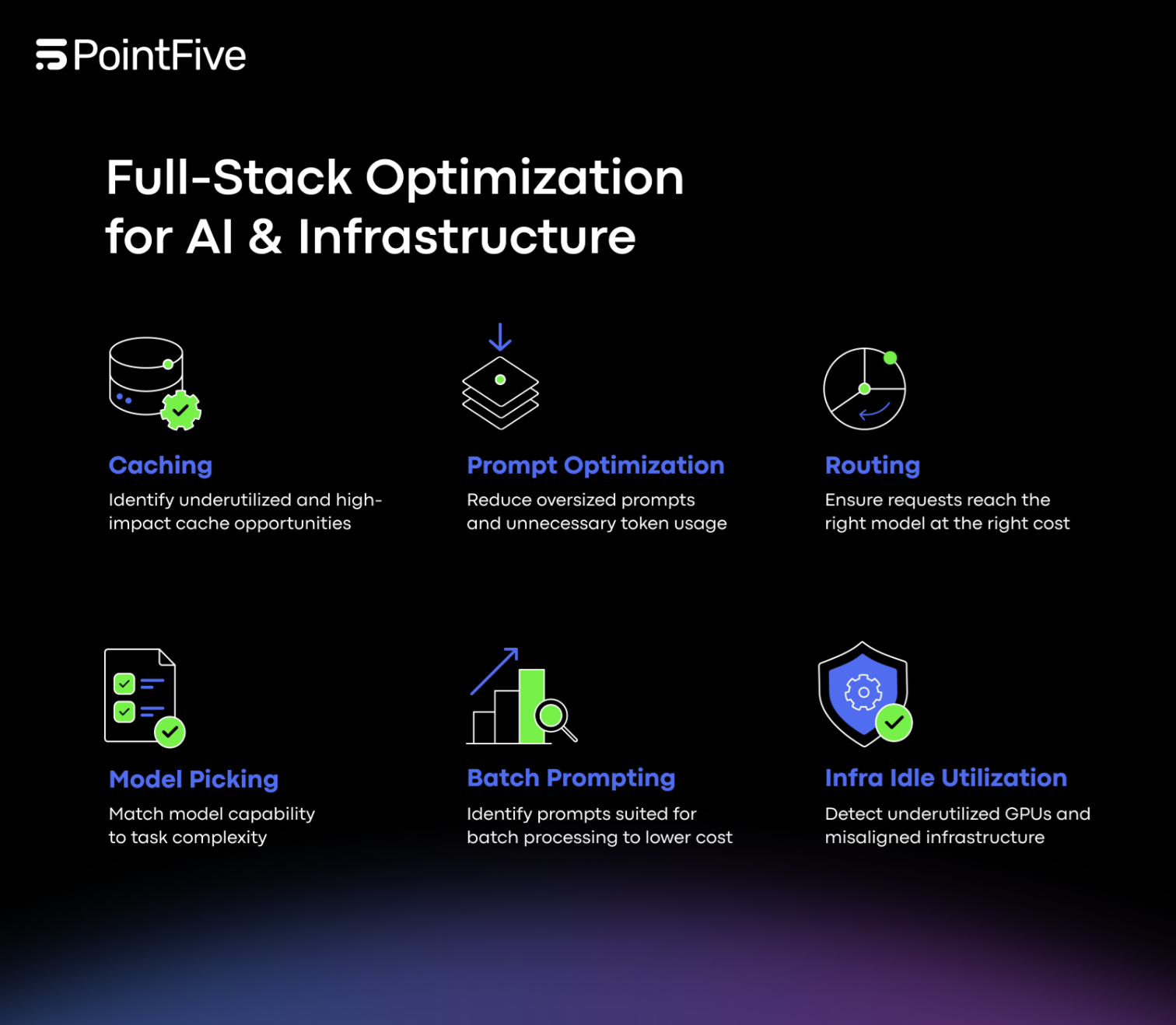

Model & Routing Intelligence — DeepWaste evaluates whether the selected model aligns with task complexity. It detects model-task mismatch and routing downgrade opportunities where smaller, more cost-efficient models can deliver equivalent outcomes. It also surfaces batch vs. real-time routing misalignment and benchmarks similar workloads to detect cost outliers that signal structural inefficiency. This ensures requests reach the right model, at the right cost.

Token & Prompt Efficiency — At the token layer, DeepWaste analyzes input, output, and context allocation patterns. It detects prompt bloat, context window overprovisioning, and output inflation. It flags parameter-task misalignment, such as temperature or sampling settings that increase cost without improving quality. It also correlates token volume with networking impact and surfaces structural token waste patterns that compound at scale. The result: reduced token footprint without sacrificing outcome quality.

Caching & Reuse Optimization — DeepWaste continuously evaluates reuse behavior. It detects duplicate inference requests, identifies underutilized native caching capabilities across providers, and analyzes cache miss patterns that silently inflate LLM spend. This eliminates recomputation and unlocks structural efficiency gains.

Infrastructure & Operational Leakage — Optimization doesn't stop at inference. DeepWaste identifies idle or underutilized GPUs, instance-type mismatches, and OS or driver configurations that limit effective throughput. It surfaces provisioning misalignment between allocated hardware and actual workload demand. Operationally, it detects retry-driven cost inflation, error loops, latency outliers, and execution patterns that drain AI budgets without visibility. Each detection is grounded in unified workload signals, not surface-level billing anomalies.

From Detection to Remediation

DeepWaste AI is not a reporting tool. Every finding includes a quantified savings estimate and clear implementation guidance. Teams understand what to change, why it matters, and what the expected financial impact will be before acting. Optimization moves from visibility to execution. This transforms AI efficiency from a reactive review process into a continuous, measurable discipline across models, infrastructure, and data platforms.

Built for AI at Scale

As AI adoption accelerates, cost visibility alone is insufficient. Efficiency requires understanding how LLM services, GPU infrastructure, and AI data platforms interact — and continuously aligning execution behavior with business value.

DeepWaste™ AI delivers full-stack AI coverage, agentless deployment across providers, privacy-preserving optimization, deep behavioral inefficiency detection, and quantified savings tied directly to remediation.

Most optimization tools were not built for LLM and AI behavior. DeepWaste™ AI was.

Actual waste — found, measured, and remediated — across every AI workload you run.